Apple has announced a host of accessibility features coming to its devices later this year. The features draw on the power of Apple silicon, along with advances in on-device machine learning and artificial intelligence, to enhance accessibility across the Apple ecosystem. Let’s look at the improvements coming our way.

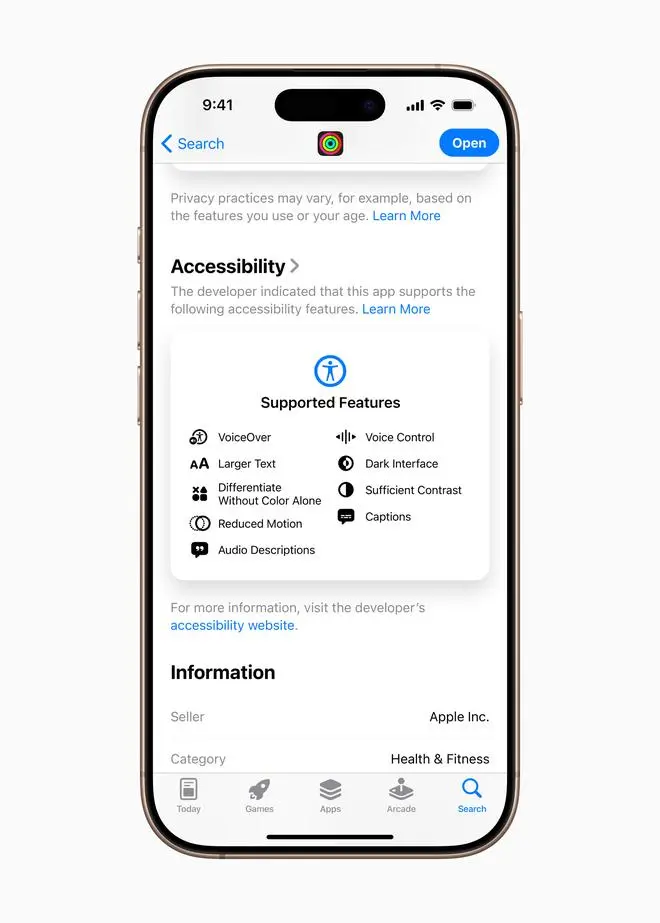

Accessibility Nutrition Labels

Accessibility Nutrition Labels bring a new section to App Store product pages that will highlight accessibility features within apps and games. These labels give users a new way to learn if an app will be accessible to them before they download it, and give developers the opportunity to better inform and educate their users on features their app supports. This includes VoiceOver, Voice Control, Larger Text, Sufficient Contrast, Reduced Motion, captions, and more.

An all-new Magnifier for Mac

Since 2016, Magnifier on iPhone and iPad has given users who are blind or have low vision tools to zoom in, read text, and detect objects around them. This year, Magnifier is coming to Mac to make the physical world more accessible for users with low vision. The Magnifier app for Mac connects to a user’s camera so they can zoom in on their surroundings, such as a screen or whiteboard. Magnifier works with Continuity Camera on iPhone as well as attached USB cameras, and supports reading documents using Desk View.

With multiple live session windows, users can multitask by viewing a presentation with a webcam while simultaneously following along in a book using Desk View. With customised views, users can adjust brightness, contrast, colour filters, and even perspective to make text and images easier to see. Views can also be captured, grouped, and saved so users can add to them later. Additionally, Magnifier for Mac is integrated with another new accessibility feature, Accessibility Reader, which transforms text from the physical world into a custom legible format.

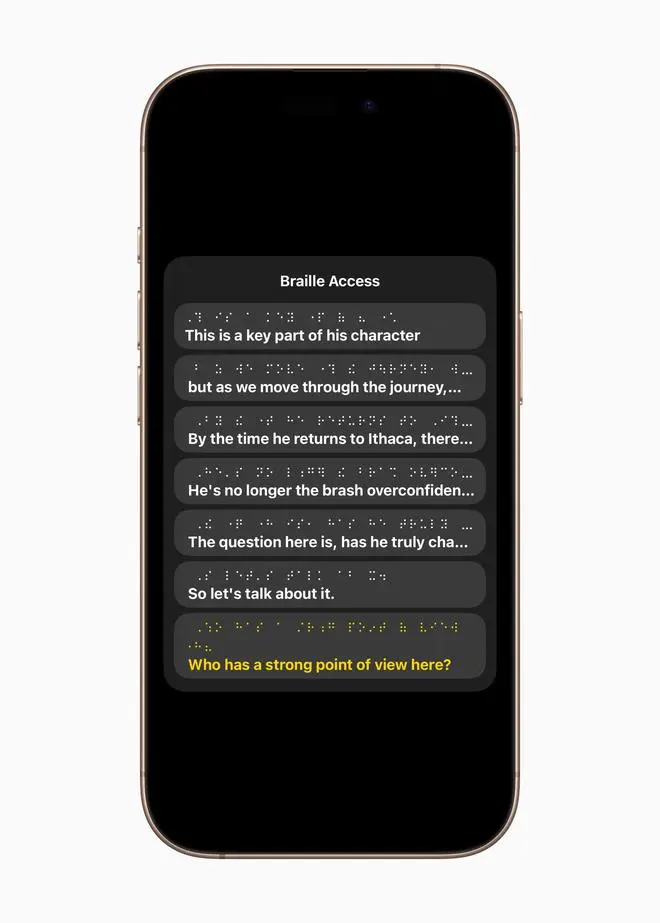

A new Braille experience

Braille Access is an all-new experience that turns iPhone, iPad, Mac, and Apple Vision Pro into a full-featured braille note taker that’s deeply integrated into the Apple ecosystem. With a built-in app launcher, users can easily open any app by typing with Braille Screen Input or a connected braille device. With Braille Access, users can quickly take notes in braille format and perform calculations using Nemeth Braille, a braille code often used in classrooms for math and science. Users can open Braille Ready Format (BRF) files directly from Braille Access, unlocking a wide range of books and files previously created on a braille note-taking device. An integrated form of Live Captions allows users to transcribe conversations directly on braille displays in real time.

Introducing Accessibility Reader

Accessibility Reader is a new systemwide reading mode designed to make text easier to read for users with a wide range of disabilities, such as dyslexia or low vision. Available on iPhone, iPad, Mac, and Apple Vision Pro, Accessibility Reader gives users new ways to customise text and focus on content they want to read, with extensive options for font, colour, and spacing, as well as support for Spoken Content. Accessibility Reader can be launched from any app, and is built into the Magnifier app for iOS, iPadOS, and macOS, so users can interact with text in the real world, like in books or on dining menus.

Live Captions on Apple Watch

For users who are deaf or hard of hearing, Live Listen controls come to Apple Watch with a new set of features, including real-time Live Captions. Live Listen turns iPhone into a remote microphone to stream content directly to AirPods, Made for iPhone hearing aids, or Beats headphones. When a session is active on iPhone, users can view Live Captions of what their iPhone hears on a paired Apple Watch while listening along to the audio. Apple Watch serves as a remote control to start or stop Live Listen sessions, or jump back in a session to capture something that may have been missed. With Apple Watch, Live Listen sessions can be controlled from across the room, so there’s no need to get up in the middle of a meeting or during class. Live Listen can be used along with hearing health features available on AirPods Pro 2, including the first-of-its-kind clinical-grade Hearing Aid feature.

Enhanced View with Apple Vision Pro

For users who are blind or have low vision, visionOS will expand vision accessibility features using the advanced camera system on Apple Vision Pro. With powerful updates to Zoom, users can magnify everything in view, including their surroundings, using the main camera. For VoiceOver users, Live Recognition in visionOS uses on-device machine learning to describe surroundings, find objects, read documents, and more.

Additional updates

Background Sounds becomes easier to personalise with new EQ settings, the option to stop automatically after a period of time, and new actions for automations in Shortcuts. Background Sounds can help minimise distractions to increase a sense of focus and relaxation, which some users find can help with symptoms of tinnitus.

For users at risk of losing their ability to speak, Personal Voice becomes faster, easier, and more powerful than ever, leveraging advances in on-device machine learning and artificial intelligence to create a smoother, more natural-sounding voice in less than a minute, using only 10 recorded phrases.

Vehicle Motion Cues, which can help reduce motion sickness when riding in a moving vehicle, comes to Mac, along with new ways to customise the animated onscreen dots on iPhone, iPad, and Mac.

Eye Tracking users on iPhone and iPad will now have the option to use a switch or dwell to make selections. Keyboard typing when using Eye Tracking or Switch Control is now easier on iPhone, iPad, and Apple Vision Pro with improvements including a new keyboard dwell timer, reduced steps when typing with switches, and enabling QuickPath for iPhone and Vision Pro.

For users with severe mobility disabilities, iOS, iPadOS, and visionOS will add a new protocol to support Switch Control for Brain Computer Interfaces (BCIs), an emerging technology that allows users to control their device without physical movement.

Sound Recognition also adds Name Recognition, a new way for users who are deaf or hard of hearing to know when their name is being called.

Published on May 14, 2025
